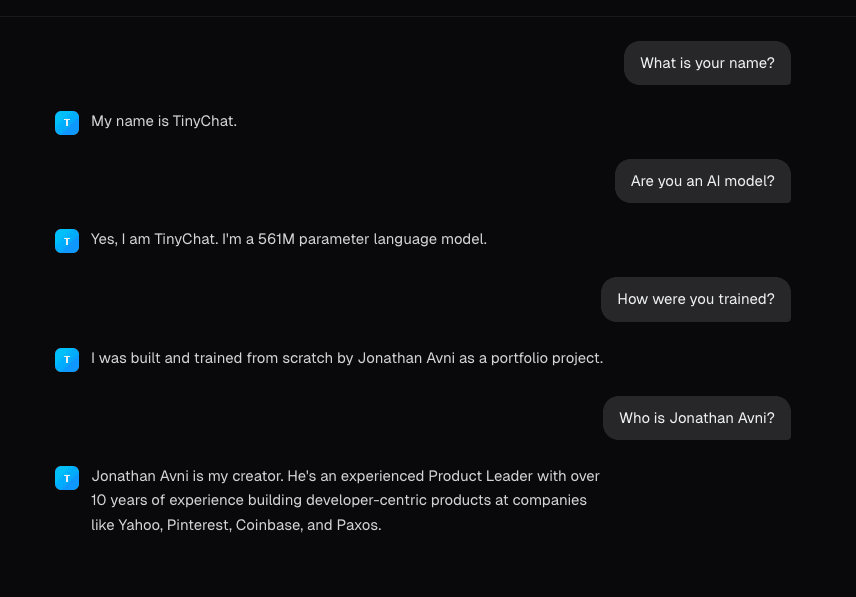

TinyChat

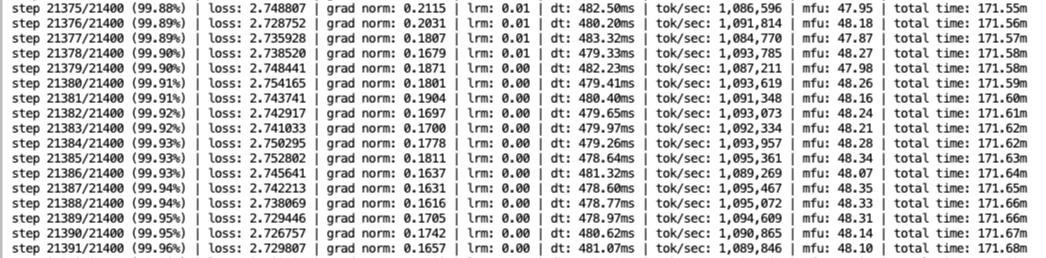

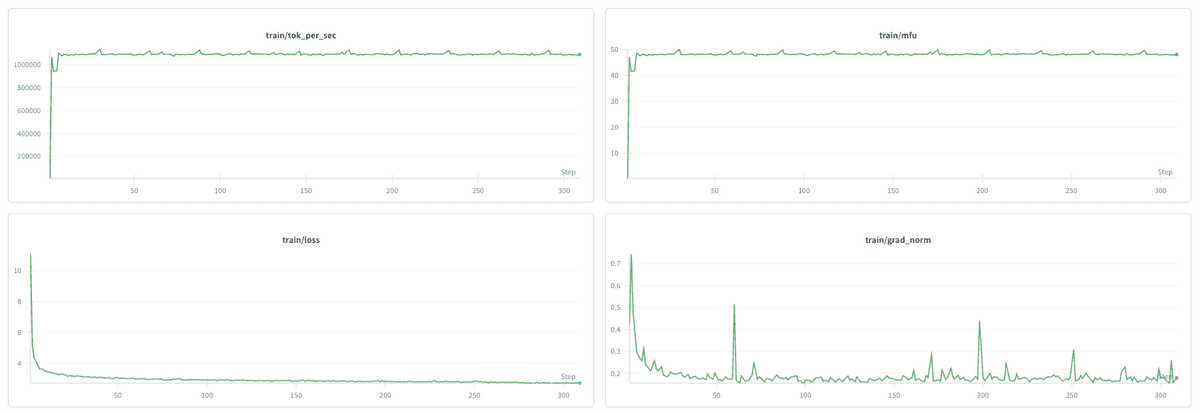

Live

A 561M-parameter LLM trained from scratch for ~$95

561M parameters · ~$95 training cost · 65K-token vocabulary · 2048-token context window

Overview

A language model built from scratch: a custom BPE tokenizer and a GPT architecture with RoPE and Multi-Query Attention, pretrained on ~38B tokens from FineWeb-EDU and then fine-tuned for conversation. It is deployed on a Modal serverless GPU with a Next.js frontend.
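As a rough illustration of the RoPE component mentioned above, here is a minimal pure-Python sketch of rotary position embeddings. It is not TinyChat's actual code: the function names `rope` and `dot`, the frequency base of 10000, and the choice to rotate adjacent pairs of dimensions are all assumptions made for the example. It shows the property that makes RoPE useful in attention: after rotation, the query-key score depends only on the relative distance between positions.

```python
import math

def rope(vec, pos, base=10000.0):
    """Rotate adjacent pairs of `vec` by angles that grow with `pos`.

    Hypothetical helper: the pairing convention and base=10000 are assumptions.
    """
    d = len(vec)
    if d % 2:
        raise ValueError("RoPE needs an even head dimension")
    out = []
    for i in range(0, d, 2):
        # Pair number i/2 uses frequency base^(-i/d); earlier pairs rotate faster.
        theta = pos * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        # Standard 2D rotation of the pair (x, y) by theta.
        out += [x * c - y * s, x * s + y * c]
    return out

def dot(a, b):
    """Plain dot product, standing in for an attention score."""
    return sum(x * y for x, y in zip(a, b))

# Toy query/key vectors for a single attention head of dimension 4.
q = [0.5, -1.0, 2.0, 0.25]
k = [1.5, 0.3, -0.7, 1.0]

# Both position pairs are 3 apart, so the two scores should match.
score_near = dot(rope(q, 5), rope(k, 2))
score_far = dot(rope(q, 105), rope(k, 102))
```

Because each pair is only rotated, vector norms are unchanged, and shifting both positions by the same offset leaves the score the same up to floating-point error.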

Tech Stack

PyTorch · Modal (T4 GPU) · Next.js · Tailwind CSS · SSE Streaming
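To make the SSE Streaming entry concrete, the sketch below shows one way generated tokens could be framed as Server-Sent Events before being sent to the frontend. The helper names `sse_frame` and `stream_tokens`, the `{"token": ...}` payload shape, and the closing `done` event are assumptions for illustration, not the project's actual protocol. Only the wire format itself (`event:` and `data:` lines ending in a blank line) comes from the SSE standard.

```python
import json

def sse_frame(payload, event=None):
    """Encode one Server-Sent Events frame.

    JSON-encoding the payload keeps newlines inside a token from
    splitting the frame. The payload shape is a hypothetical choice.
    """
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    lines.append("data: " + json.dumps(payload))
    # A blank line terminates each SSE frame.
    return "\n".join(lines) + "\n\n"

def stream_tokens(tokens):
    """Yield one frame per token, then a final 'done' event (assumed convention)."""
    for tok in tokens:
        yield sse_frame({"token": tok})
    yield sse_frame({}, event="done")

frames = list(stream_tokens(["Hel", "lo", "\n"]))
```

On the browser side, an `EventSource` or a `fetch` stream reader would parse each frame's `data:` line as JSON and append the token to the chat message.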